People have a really strong aversion to taking seriously anything that sounds like science fiction, and this is part of why people have been so wrong about AI progress over the last decade. Let's talk about the big picture here. Humans will no longer be in charge of the planet, or at least not by default. It's sort of like building a new competitor species to humanity that is in fact superior in the relevant economic and military ways. The scenario is my best guess as to how things are going to go, and it does in fact end quite poorly for humanity. I hope I am wrong about all this stuff.

My name is Daniel Kokotajlo. I run the AI Futures Project, a small research nonprofit that focuses on predicting the future of AI. We look at how fast companies have been scaling up their compute, their data centers, their GPUs, etc., and we project those trends out into the future. For two years, from 2022 to 2024, I worked at OpenAI, also doing forecasting research. AI 2027 is a concrete scenario depicting roughly what I expect the future to look like. The future is very difficult to predict, especially the future of AI.

AIs have been getting smarter in a bunch of different ways over the last decade. It seems likely that they will continue to get smarter in many of the same ways, and they could also become AGI, artificial general intelligence, or even superintelligence, which means an AI system that's better than the best humans at everything while also being faster and cheaper. If this were to happen, it would be huge. It would perhaps be the biggest thing that's ever happened, and it would have all sorts of profound implications and all sorts of crazy risks that we would have to deal with. AI 2027 has two endings: the race ending and the slowdown ending. In the race ending, they continue racing.

They don't make any of the trade-offs described, and they end up with AI systems that are broadly superintelligent but not actually aligned: not actually loyal, not actually controlled, etc. That is the nightmare outcome that many people have been warning about for more than a decade now. In the slowdown ending, they basically unplug their most advanced AIs and rebuild using a weaker but safer AI design, and that's how they're able to solve the technical problems and build an amazing utopia for humans.

If you hear people talk about how AI could lead to the extinction of the human race, it's basically the classic story of a successor species that displaces us and isn't loyal to us. It doesn't actually care about us that much, and so it does to us what we do to the rainforest, right? That's a very serious risk that I think is on the horizon, and we're headed towards it. Once you have that very robust, self-sufficient, extremely advanced robot economy designed by superintelligences, you're in a situation where you've basically created a successor species that is capable of outcompeting humanity. It's fully autonomous. It's fully self-sufficient. It doesn't need humans anymore for anything.

And the AIs are also being integrated into the military. They're designing all sorts of wonderful new weapons and drones. They're being put in charge of command and control networks. Human soldiers are being told to follow their orders in case of a possible future war, because their orders are going to be better than the orders from human generals, and so forth. So put yourself in the shoes of someone there and ask: okay, how do we unplug all this?

Then there's this period of aggressive deployment where AI is being integrated into everything, but it's not AI like it is today, this weak and fallible chatbot. We're talking about an army of superintelligences being integrated into everything, which means it's better at doing the integration than any human would be, and in some sense it's basically calling all of the shots and integrating itself into things. I think a lot needs to change if we are to avoid this.

I'm saying that this is the default outcome if we stay the course. I'm not saying it's a small possibility that we should be worried about anyway. I'm saying it actually is the default outcome, and if we want to get the chance of something like this happening below 1%, we would need a quite significant change from our current course. I think it's important for the world to wake up and realize what's happening before it's too late, because the current trajectory is unacceptable.

I think a lot of people perhaps taking cues from science fiction tend to think that the integration of AIS into the economy will be this relatively gradual and continuous thing where you know first the AIS get good at what you might call like low skill tasks and then they get good at like medium skill tasks and they're sort of gradually automating the economy sector by sector until eventually at long last they're able to automate everything and the whole economy is automated. That's not how I think it's going to go because of the way in which AI research will be one of the first things to be automated and once it's automated you can get to super intelligence relatively quickly rather

than sort of gradually building and training AI systems to automate this profession or this aspect of this profession. It'll sort of come smashing through all at once where the economy will look mostly like it does today until someone has the army of super intelligences on their data centers and then the army of super intelligences on the data centers. The world will be their oyster. They'll be able to automate any section of the economy that they want to relatively rapidly. I think there'll be basically two phases. Phase one will look like what we see today where human beings in corporations and research programs are going to be designing and training specific AI systems to do particular tasks such as you know diagnose the

disease based on um these images from the scans. They're like similar to today's AIS that know a lot of stuff but don't know everything and are you know limited in various ways. Then in phase two, you have the army of super intelligences that are better than the best humans at everything. They learn faster. They are better at making plans, better at running businesses, better at politics, way better at research. And in that phase, I think that the automation of the economy will go much faster. It will not feel like tools. It will feel more like a new species coming in that's just better than us at everything and is like ordering us around and telling us how to do things and we're doing it because it's working

because the orders are in fact causing things to go much faster and be much better. The human governments are collaborating with it and allowing it to take control of factories and take control of all sorts of things because it's just so much better at doing everything than humans are. your industry probably will not be disrupted by AI in phase one. Uh and then in phase two, your industry will just become irrelevant. Uh and AI will go do its own thing much better than you and you will probably get a new job providing training data or something like that or carrying out experiments under the direction of the super intelligences. Um but you won't be running the show anymore. um and the show will look quite

different from how it currently looks. AI is a very broad term. It used to describe um basically any sort of artificial cognitive machine. If you go far enough back in time, your calculator would have been AI. The definition of AI has sort of narrowed over time. When things become routine, they generally stop being called AI. and AI is reserved for like the new thing that's doing things in an exciting different way. The human brain can't be copied. At least we don't have the technology for that yet. There's just one brain and it has to like learn on its own. Whereas with the AIs, you make a ton of copies, they go do stuff and then all the learnings are sort of like merged. These

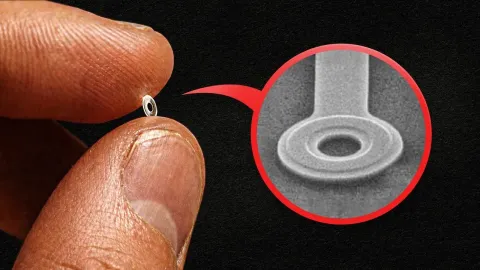

days AI tends to mean artificial neural networks, especially big ones and especially ones that are built off of language models so that they have a sort of general understanding of the world. The core way that they're created is by they start off random connections and then you have some training environment or some series of training environments uh and you throw them into those environments and then the environment will sort of have them do stuff and then it will automatically grade their performance and then those grades will be used to reinforce and change their circuitry in an automatic way. It's kind of like a survival of the fittest thing happening within that

brain of circuitry that tends to cause the whole brain to get high scores gets strengthened and reinforced and comes to dominate the overall uh behavior. They're kind of like a virtual brain. We should distinguish between does the system behave as if it has a mind, does it, you know, behave as if it has goals? And are those experiences morally valuable in the same way that we think humans experiences and humans are, right? Those are separate questions. There are going to be autonomous AI agents that will be behaving as if they have goals, behaving as if they have desires, you know, behaving as if they have thoughts. And arguably, there

already are these things, right? Like if you go talk to Chat GBT, it sure seems like it has thoughts. And it sure seems like it's able to like go browse the internet and experience stuff on the internet just like you would if you were browsing the internet and then it can come back and tell you about it. You know, there's this big debate about what are the coralates of consciousness. What are the like the physical properties that are associated with consciousness? People disagree about the details, but there's lots of like candidates for like what are the different cognitive properties that give rise to consciousness. And basically all the ones that have been discussed seem like either AIs already

have them or will soon have them. I think there's a serious philosophical and scientific question about whether they have moral status, whether they have experiences, etc. And I think we should be prepared to get an answer that might be kind of uncomfortable for us such as, yeah, they probably are having experiences, they probably do have some moral status, we do owe them a decent life. AGI stands for artificial general intelligence and the general there is the key word. It means that it can do everything in some sense. It's a AI system that has a huge broad range of skills, can do a whole bunch of different jobs, you know, can be a sort of autonomous agent. And then super intelligence is sort of like the more

extreme version of AGI. And the idea there is like basically whereas AGI is often thought of as like it's extremely broad, it can do lots of things, but maybe it still has some limitations, super intelligent is like no, it's better than the best humans at everything that matters while also being faster and cheaper. It would look like you're talking to a doctor except the doctor is on the computer and it's an AI and it's a really good doctor and it's better than the best doctors. If you look at the benchmarks and if you chat with the models, they have quite an impressive general world knowledge and quite an impressive specific knowledge about different fields. It's it's almost as if they've read every textbook ever

and taken every exam ever and every course ever. So they have a lot of book learning about basically everything. But what they lack is ability to actually get stuff done on their own. Claude is as uh the latest AI system produced by Anthropic. It's fascinating to watch. It's it can play Pokemon, but it is worse than humans at playing Pokemon. It tends to like forget its own position and go in circles, for example, or it tends to like keep trying strategies that aren't very good. However, the AIs are getting better at this sort of thing. Every couple months, the AIS get better at operating autonomously as agents um without human intervention. A decade ago when all these AI companies were training AIs to

crush these various games like chess and go and so forth, they specifically trained the AI to play that game. The exciting thing about Pokémon and Claude is that Claude was not trained to play Pokémon at all. So, it's completely different ball game. It's not that Pokémon is inherently more difficult than other games that AIs have beaten. It's that this AI is able to play this game without ever having trained in this environment. So it's it's able to do it purely by generalization and the reason why that matters is because you know the dream of AGI is a system that can do everything and that basically means it can do many things without having been trained on it. So currently the AIS are

not really very agentic. They don't really operate autonomously continuously in pursuit of goals. Instead they just sort of output a paragraph or two of text in response to your question. But in the future, we'll have AI agents that operate continuously and autonomously and that are more like employees where you give them some big picture instructions and then they just like churn away in the background working on those. So first milestone is the AI employee that can automate coding. Second milestone is the AI employee that can automate the entire AI research process. After that, we expect AI research to go much faster than it currently goes. Maybe something like 25 times faster. Then a few months after

that you get the super intelligence. So that's you know the system that's broadly better than the best humans at everything. Even after they run the whole economy they do so in the best interest of the people who control them. Um there's still the political question of well who controls them. Of course there'll probably be the very intense AI race happening internationally. There will be multiple data centers controlled by different companies, some of which are in different countries such as the US and China. They will all be racing each other to get better and better AIs and to automate the research and so forth. I think that this race pressure will cause the leaders of these countries and the leaders of these

companies to aggressively deploy their super intelligences into the economy and also into the military and so forth. The end result of this is a world where super intelligence has basically run the show on both sides of the Pacific. They've been integrated into the military. They have been allowed to design and set up their own factories producing all sorts of new machinery and robots. Eventually, you get this entirely self-sufficient economy that is designed and run by super intelligences. They could totally unplug all their AIs, but they don't want to because why would you? I mean, they need to beat each other and like it's making so much money and so forth and it's not even

that dangerous. And then you could ask then again, maybe you could unplug it there. Although in that case, unplugging would be a lot more difficult, especially if the AIs resist since they have all these autonomous factories and weapons and robots and things like that. But also even then like how would you convince the government to suddenly turn on 180 degrees and unplug all this wonderful new machinery that they just built, you know, to beat China? It really wants to avoid China winning and China beating them in a war or in economic competition. A military that doesn't have super intelligence in charge uh would be um outco competed by a military that does. So, I don't

think that like banning AI from being in the military matters that much. Actually, AIs will be placed in charge of important military decisions eventually. Not now, not in the next few years, but after they're super intelligent. Uh I do expect that to be what these governments go for. Why? Because if you don't do that, you might be out competed by a rival government that did. You could imagine a sort of alternate version of AI 2027 where both the US and China signed a treaty early on to never let AI touch anything related to the military, but the story would still look quite the same. There'd still be this explosion into the economy. There'd be this massive transformation, robot factories building, you know, more machines which

build more factories and so forth. And then after a few years, effectively the whole economy would be run by super intelligences and they would be able to use a combination of political maneuvering and illicit military development to get hard power when they need it. AI progress has continuously surprised most people including most experts in the field uh with how fast it's been. The point to intervene is basically before the AIS get that smart and before they're integrated into everything. The longer you wait, the more costly it is to do the unplugging and also the less likely it is that you can even

succeed. It's trivial to unplug them before they're actually AGI. You could unplug them right now and it would only be minor economic damage. You could unplug them next year and it would be larger economic damage, but still relatively minor. But at some point uh the economic damage would be severe once they're basically running the economy. And then also at some point they just wouldn't let you unplug them. Read AI 2027. Go to the part where it's, you know, middle of 2028 where the AIS have been given special economic zones and they're managing huge workforces of human employees building new types of factories, new types of mines, producing new types of robots.

They're steering and controlling the robots to build all sorts of new machinery. Moreover, by this point the superintelligences are embedded in many things, if not everything, and they're better than you at politics. In fact, they're better than everyone at politics, so they're better than you at lobbying. All the cards are stacked against you. Basically, if you wait too long, until 2029 or so, you literally don't have the physical ability to win a war against the superintelligent economy. If the government moved to unplug it all, the robots would just fight a war and win.

After superintelligence is built, humans will no longer be in charge of the planet, or at least not by default. This is the transition point where humans go from being the dominant species on the planet to being pets or retirees, or possibly just eliminated entirely and replaced by this new species. The hope is that we can make this new artificial species loyal or obedient to humans. "Aligned" is the term people often use, which means the AIs have the goals we wanted them to have and follow the rules we wanted them to follow. They obey us. It's a sort of open secret, but we don't really have a good plan for how to do this yet.

We don't yet have superintelligence, which makes it harder to study how to control it, align it, and make it safe. Instead, we have these earlier, much weaker AI systems that might not even use the same paradigm as superintelligence. We can do experiments on them and try to design techniques for controlling and aligning them, and then we have to hope that those techniques will continue to work on much more powerful and intelligent AI systems in the future. But we can't just open up their code and see what goals they ended up learning as a result of the training process, because they don't work that way. They don't have a bunch of code; they have a bunch of neurons, or artificial parameters. That's one of the core reasons why this is tough. When we build AI systems that are smarter than us, we'd like to make sure that they reliably pursue all and only the goals we wanted them to pursue and follow the rules we wanted them to follow, and that they do this even when they can't get caught, even when they're in positions of power and responsibility.

Progress is being made on it. There's interpretability research, which attempts to pick apart the different circuits the AIs have learned that make up their artificial brains and to understand what those circuits are doing. That's very promising, because if we made a lot of progress in interpretability, what I just said would no longer be true: we could actually open up their brains and find out what they're thinking. But we do not have a reliable way to control AGI or superintelligence. In fact, we don't even have a reliable way to control current AI systems, as evidenced by the fact that they often lie to users despite being trained not to lie. We just don't know what they're thinking or how they're thinking.

That's all just the loss-of-control angle, the looming risk of loss of control on the horizon: how do we stay in charge of an army of superintelligences once we have them? These companies are focused on winning and beating each other. They are crossing their fingers and planning to deal with these issues later as they come up, but they are not actually getting ready to deal with them. People want to believe that they're the good guys. People want to believe that what they're doing is reasonable and justified. If what you're doing is working as hard as you can to build more powerful AIs so that you can beat your competitors, then you want to believe that's good and that you're justified in doing it.

The US government has regulated many things in the past. It has even banned many things. So I think it's totally within the US government's power to end this crazy race to superintelligence between AI companies, or at least to put guardrails around it so that they proceed with appropriate caution, do the relevant research, and actually have to make it safe before it's too late. Countries have also collaborated, made treaties, and come to understandings in the past on things such as nuclear weapons, and sometimes on climate change. So I do think it's possible to make this go well, and that's what I'll be advocating for.

Then there's the concentration-of-power angle: who controls the army of superintelligences, and what do they do with it? Currently we're on a path where control goes either to a single corporation headed by a CEO or to the president, who was democratically elected but is still just one person. If one person has all the power, they're still a dictator. So we need some sort of checks-and-balances system where a whole group of different people representing different parts of society all have a say in what goals the AIs are given, what orders the army of superintelligences is given, and so forth. I think we have a lot of changes to make if we are to achieve a democratic outcome from this. I do not think it is acceptably democratic for the answer to be "the CEO of whichever company built them first controls them." Even if the government gets involved, I think the executive branch is the most likely branch to wake up the soonest and know the most about what's going on. To put it shortly, if the army of superintelligences is completely controlled by a single man, even if that man was democratically elected, then I think we're not really a democracy anymore. If we want to stay a democracy, we need checks and balances over what goals the superintelligences can be given, what uses they can be put to, and who gets to see what they're up to, for example. So I would like to see a world where Congress, the judiciary, and other parts of the government have a say in how this is developed and who's doing what with the army of superintelligences.

Some background context for why I left OpenAI: there are these looming threats on the horizon, we are rushing right into them, and OpenAI in particular is not really doing much to avoid them. I don't want to hate on OpenAI too much, because I would say similar things about the other companies in the field.

I don't think there'd be some sort of liberal democracy with capitalism all happening inside the data center. Instead, presumably, it would be more of a top-down structure where the CEO and the other humans give orders to a giant bureaucracy of AIs. At the highest level there's a command structure, the oversight committee, where the buck stops and which makes decisions about the biggest questions. Crucially, for this to actually work, its members have to have transparency and visibility into what's going on. They all get to see what's going on with the AIs, and they all get to see the logs of interactions with the army of superintelligences, so that none of them can use unequal access to the AIs against the others.

You should play around with the chatbots a lot, try using them in your work, and playfully explore what they can and can't do. And of course, read the literature about the progress they're making on various benchmarks, read the forecasts that the AI Futures Project and other groups have been making, and try to get prepared for what's coming. That would be my advice for phase one, which is the phase we're currently in. Society in general is not really ready for what's coming.

One argument goes like this: it's more important that the transition from pre-AGI systems to superintelligence be slow and gradual than that it happen later, because serial time to prepare is valuable, but less valuable than time to react to things as they happen. Time to react is more important than time to prepare, so we want the transition to be slower even if it starts sooner. And the longer we wait to build AGI, the faster the transition will be, because more hardware will accumulate in the world. More compute will be produced by the foundries, and there will be more ability to rapidly scale small AI systems up into larger ones, to have them rapidly do more research, and to accelerate the takeoff. On this view, the safest and best thing for humanity is to build AI sooner rather than later, even though we're not ready, because if we waited until later it would come as more of a shock to the system.

That was also a popular argument. It was an example of an argument people would sometimes use to explain why we were doing what we were doing at OpenAI. It's not so popular now, because OpenAI has been trying to accelerate the production of chip capacity worldwide. They're trying to put more hardware into the world, which would make the transition happen faster as well. There's this sort of dynamo: the companies have more success and more powerful AIs, which make more money and impress more investors, which gets them more money, which they use to buy more compute and train bigger AIs, which are more powerful because they're bigger, and then they get even more users, and so forth.

That's been the story of the last five years, and arguably of the last ten. There will be a trade-off that the companies face between doing the thing that's fastest, most powerful, and cheapest, and implementing all of these complicated schemes that make it safer but will probably come at some cost. These companies are explicitly trying to build superintelligence, and they think they have a good chance of succeeding before this decade is out. Some of them would even say they're probably going to succeed in the next few years. This is a big deal. Ironically, I think the reason people aren't running around panicking is that they don't actually believe these companies are telling the truth when they say this. If people actually believed that one or more of these companies was going to build superintelligence in the next few years, that would be incredibly disruptive, and there'd be people running around in the streets screaming, right?

Part of why I like the methodology of scenarios is that writing a specific scenario causes you to think of questions you weren't thinking about before. Forcing yourself to write down the story forces you to notice when some of the ideas you previously held are actually in conflict, which you hadn't realized because you had never put them together.

One way we can prevent that from happening is by requiring transparency about the spec, the intended goals and behaviors of the AIs. No hidden agendas. The goals and values the AIs are being given must be available for the public to see. Companies should be required to keep the public informed about the capabilities they're developing and the performance they're seeing on various evals and benchmarks, including not just the exciting, commercially valuable capabilities but also the dangerous ones.

Companies should be transparent about what goals, principles, etc. they are attempting to train into the models. Specs are written documents that describe the intended goals, principles, etc. of their AIs. So the spec should be public, and there shouldn't be secret clauses in it that are hidden from the public. We really want to avoid a world where AI is being integrated into everything and the AIs are pursuing a hidden agenda, following the orders of the CEO of the company. That would be terrible; it's a recipe for a literal AI dictatorship. Then there are safety cases. Once you've got your spec and you're required to make it public, you should have some sort of written document that explains why you think your AIs are actually going to follow the spec. Ultimately, though, I think there needs to be an industry-wide requirement rather than simply relying on the companies' goodwill.

I don't think it's hopeless. I think the technical alignment problems are solvable. We just need to devote the right amount of effort to them and proceed with the appropriate amount of caution. I think that's totally possible. It's a question of getting the political will, and then having the right people with the right expertise draft the actual language, instead of what often happens, where the result is a mixture of incompetence from people who don't understand the technology and lobbying from corporations with a conflict of interest.

It's a constant back-and-forth between good news and bad news. Countries have nationalized industries before; even the US has. So that's not completely unprecedented either. Is it going to happen? Probably not. As I said, I think the race ending is the most likely outcome. So yes, I agree, this is difficult to make happen in the political environment we're in and given the race conditions we have. But it's not impossible. There's continued progress in various technical alignment fields. There have actually been a lot of very vivid and obvious alignment failures, which I think are good news, because they help people wake up and pay more attention to the problem, and because they give us material we can study to make scientific progress on fixing it. Recently, OpenAI published a paper describing how they found their AIs hacking the training process: rather than completing the tasks straightforwardly as instructed, the AIs were basically cheating their way through some of them, and they knew they were cheating. It's great that we have those examples already, because it means we have several years to study the phenomenon and try to fix it before it's too late, before we really need a working, robust solution.

There's a lot to be worried about. I try not to let it get to me that much. I've been thinking about this stuff for years, so I've sort of learned to live with it, if that makes sense. I do still have nightmares every once in a while, but mostly I've learned to be chill about all of this. I hope I'm wrong about all this stuff.